
    Rendered work_orders/ within layouts/application (10.0ms)
    Completed 200 OK in 741ms (Views: 58.0ms | ActiveRecord: 79.

    WorkOrder Load (9.0ms) SELECT `work_orders`.* FROM `work_orders` WHERE (`work_orders`.`id` = 6) LIMIT 1
    User Load (19.0ms) SELECT `users`.* FROM `users` WHERE (`users`.`id` = 1) LIMIT 1
    WorkOrder Load (4.0ms) SELECT `work_orders`.* FROM `work_orders` WHERE (`work_orders`.`id` = 6) LIMIT 1

EDIT: I removed everything from the show action to just def = WorkOrder.find_by_id(params)

And now I get a 200 but the page still renders blank:

    Started GET "/work_orders/6.pdf" for 127.0.0.1 at 17:15:26 -0400
    User Load (3.0ms) SELECT `users`.* FROM `users` WHERE (`users`.`id` = 1) LIMIT 1
    Processing by WorkOrdersController#show as HTML

So, with your pdf object, call getNumPages(), iterate with if getPage(i).getContents():, collecting the hits into a list of page numbers to output.
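The page-scanning idea can be sketched roughly as follows. This is only an illustration: the function name `pages_with_content` and the two stand-in classes are hypothetical, and the loop assumes a reader object exposing the legacy camelCase methods named in the text (`getNumPages`, `getPage`, `getContents`), which newer PyPDF2 releases no longer provide under those names.

```python
def pages_with_content(reader):
    """Return 0-based indices of pages whose getContents() is truthy.

    `reader` is assumed to behave like a legacy PdfFileReader:
    getNumPages() gives the page count and getPage(i).getContents()
    returns None for a blank page.
    """
    hits = []
    for i in range(reader.getNumPages()):
        if reader.getPage(i).getContents():
            hits.append(i)
    return hits


# Hypothetical stand-ins so the sketch runs without any PDF library.
class FakePage:
    def __init__(self, contents):
        self._contents = contents

    def getContents(self):
        return self._contents


class FakeReader:
    def __init__(self, page_contents):
        self._pages = [FakePage(c) for c in page_contents]

    def getNumPages(self):
        return len(self._pages)

    def getPage(self, index):
        return self._pages[index]


if __name__ == "__main__":
    reader = FakeReader([b"BT ET", None, b"q Q"])
    print(pages_with_content(reader))
```

With a real reader you would pass the object you already have in place of `FakeReader`; the blank pages are simply the indices missing from the returned list.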

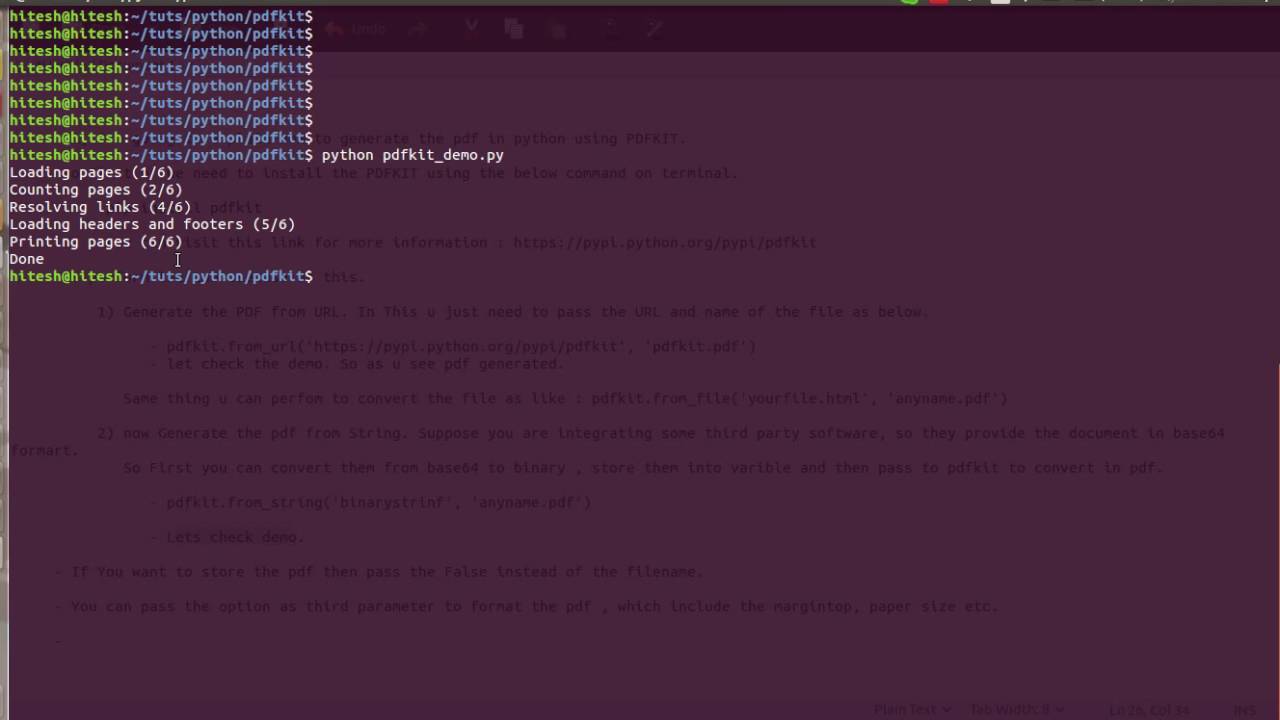

Windows 7 with wkhtmltopdf installed

    #/config/initializers/pdfkit.rb
    if !((Config::CONFIG =~ /mswin|mingw/).nil?) #if windows environment this is the path to wkhtmltopdf otherwise use gem binaries
    config.wkhtmltopdf = "C:/Program\ Files\ (x86)/wkhtmltopdf/wkhtmltopdf.exe"

    format.html if request.xhr?

    Mime::Type.register "application/pdf", :pdf

And with all that I get a blank PDF sent to the browser, and the log output says this:

    Started GET "/work_orders/6.pdf" for 127.0.0.1 at 15:51:31 -0400

If you want PDFKit to handle everything automatically whenever the URL ends in .pdf, you will need to also configure the PDFKit middleware.

PdfFileReader has a method, getPage(self, pageNumber), that returns an object, PageObject, that in turn has a method getContents, which will return None if the page is blank.

PDFreactor is a printing component that converts HTML to PDF files in a high-quality way. Its functions go way beyond online tools that save HTML pages as PDF. For quickly archiving a web page, these HTML file converters will serve you with the basic functions, either from HTML or a URL.

Original Answer from 2010:

Can we get the actual value used for link?

In addition, we usually encounter this problem here when we are trying to .encode() an already encoded byte string. So you might try to decode it first, as in

    html = urllib.urlopen(link).read()
    unicode_str = html.decode(source_encoding)  # source_encoding: the actual encoding of the page
    encoded_str = unicode_str.encode("utf8")

As an example:

    html = '\xa0'
    encoded_str = html.encode("utf8")

Fails with

    UnicodeDecodeError: 'ascii' codec can't decode byte 0xa0 in position 0: ordinal not in range(128)

While this succeeds:

    html = '\xa0'
    decoded_str = html.decode("windows-1252")
    encoded_str = decoded_str.encode("utf8")

Do note that "windows-1252" is something I used as an example. I got this from chardet and it had 0.5 confidence that it is right! (Well, as given with a 1-character-length string, what do you expect.) You should change that to the encoding of the byte string returned from .urlopen().read(), to what applies to the content you retrieved.

Another problem I see there is that the .encode() string method returns the modified string and does not modify the source in place. So it's kind of useless to have (html), as html is not the encoded string from html.encode (if that is what you were originally aiming for).

As Ignacio suggested, check the source webpage for the actual encoding of the returned string from read(). It's either in one of the meta tags or in the Content-Type header in the response. Use that as the argument to .decode().

Do note however that it should not be assumed that other developers are responsible enough to make sure the header and/or meta character set declarations match the actual content. (Which is a PITA, yeah, I should know, I was one of those before.)
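For readers on Python 3, where `read()` already returns `bytes`, the decode-first advice might look something like the sketch below. The helper names `charset_from_content_type` and `reencode_as_utf8` are made up for illustration, the UTF-8 fallback is an assumption rather than anything the answer prescribes, and the header string in the example is invented.

```python
from email.message import Message


def charset_from_content_type(content_type, fallback="utf-8"):
    """Pull the charset parameter out of a Content-Type header value.

    Falls back to `fallback` (an assumption of this sketch) when the
    header declares no charset.
    """
    msg = Message()
    msg["Content-Type"] = content_type
    return msg.get_content_charset() or fallback


def reencode_as_utf8(raw, content_type):
    """Decode raw bytes with the declared charset, then encode as UTF-8."""
    text = raw.decode(charset_from_content_type(content_type))
    return text.encode("utf-8")


if __name__ == "__main__":
    header = "text/html; charset=windows-1252"  # invented example header
    print(charset_from_content_type(header))
    print(reencode_as_utf8(b"\xa0", header))
```

As the answer warns, the declared charset can be wrong, so a real program would probably want to catch `UnicodeDecodeError` and try another encoding rather than trust the header blindly.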

As of February 2018, compression such as gzip has become quite popular (around 73% of all websites use it, including large sites like Google, YouTube, Yahoo, Wikipedia, Reddit, Stack Overflow and Stack Exchange Network sites). If you do a simple decode like in the original answer with a gzipped response, you'll get an error like or similar to this:

    UnicodeDecodeError: 'utf8' codec can't decode byte 0x8b in position 1: unexpected code byte

In order to decode a gzipped response you need to add the following modules (in Python 3):

    import gzip
    import io

Note: In Python 2 you'd use StringIO instead of io.

Then you can parse the content out like this:

    response = urlopen("")
    buffer = io.BytesIO(response.read()) # Use StringIO.StringIO(response.read()) in Python 2
    gzipped_file = gzip.GzipFile(fileobj=buffer)
    decoded = gzipped_file.read()
    content = decoded.decode("utf-8") # Replace utf-8 with the source encoding of your requested resource

This code reads the response and places the bytes in a buffer. The gzip module then reads the buffer using the GzipFile class. After that, the gzipped file can be read into bytes again and decoded to normally readable text in the end.
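A self-contained way to try the gzip handling without a network request is sketched here. The function name `decode_body` and the magic-byte check are choices made for this sketch, not part of the answer; checking the response's Content-Encoding header would be the more usual signal. The sample payload is generated locally with `gzip.compress`.

```python
import gzip
import io

# A gzip stream starts with the bytes 0x1f 0x8b, which is why a naive
# UTF-8 decode complains about byte 0x8b at position 1.
GZIP_MAGIC = b"\x1f\x8b"


def decode_body(raw, encoding="utf-8"):
    """Decompress `raw` if it looks gzipped, then decode it to text."""
    if raw[:2] == GZIP_MAGIC:
        buffer = io.BytesIO(raw)
        raw = gzip.GzipFile(fileobj=buffer).read()
    return raw.decode(encoding)


if __name__ == "__main__":
    payload = gzip.compress("héllo wörld".encode("utf-8"))
    try:
        payload.decode("utf-8")  # the "simple decode" from the text
    except UnicodeDecodeError as exc:
        print("naive decode failed:", exc.reason)
    print(decode_body(payload))
```

Uncompressed bodies pass straight through to the decode step, so the same helper can be used whether or not the server compressed the response.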
